As of April 2026, the top speed-control players for iOS are VLC for Mobile for its wide format support, KMPlayer for high-quality 4K UHD playback, and Music Speed Changer for those who need to adjust pitch independently of tempo. Whether you want 0.1x slow motion or 4.0x fast-forward, these apps are among the most reliable choices for iPhone and iPad users today.

How to Choose the Best iOS Speed Player App: Our Evaluation Criteria

Picking a great media player in 2026 involves more than just checking for a “play” button. To find the most effective playback speed control tools, we look for apps that can handle modern, high-bitrate files without lagging or stuttering. A high-quality player should offer a wide speed range, ideally from 0.1x for near frame-by-frame analysis to 4.0x for skimming content, all while keeping the audio in sync with the video.

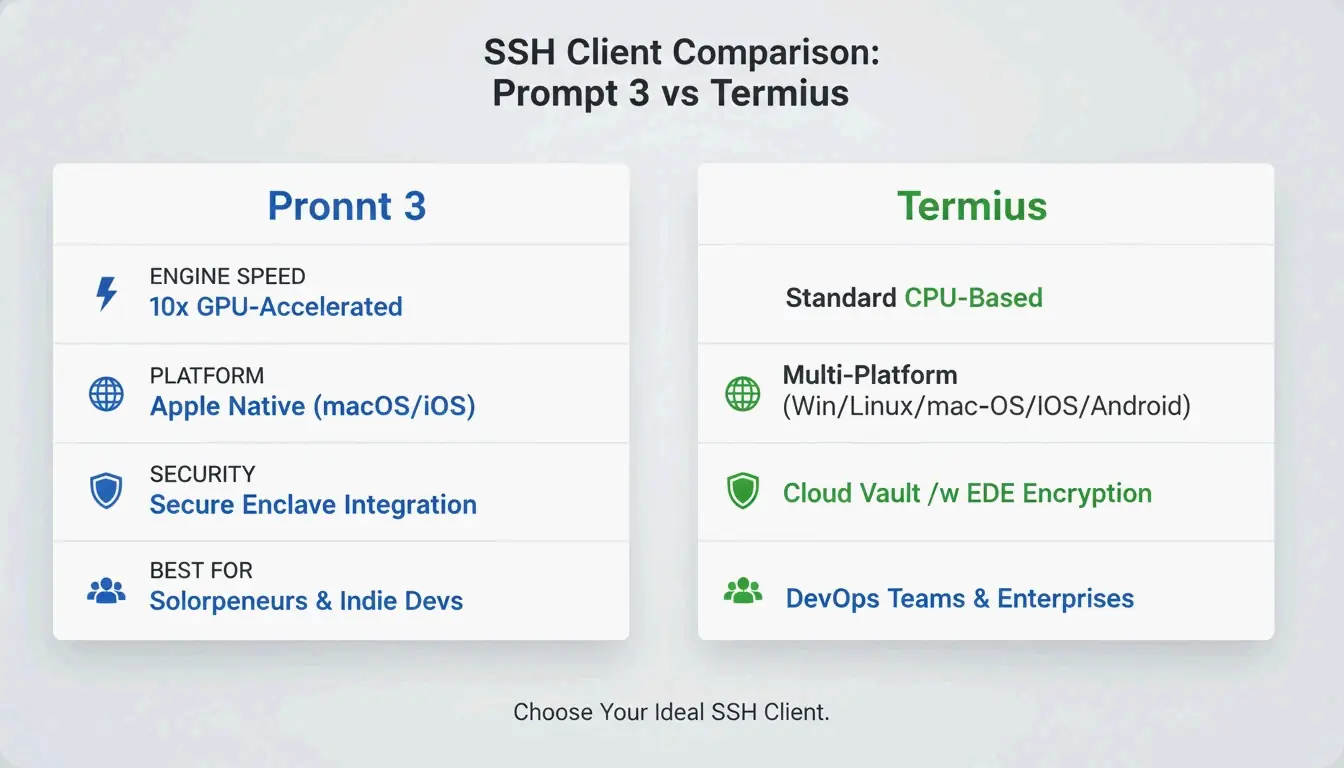

We evaluate these apps based on four main factors:

- Speed Range and Precision: Can you adjust the speed in small steps, like 0.01x or 0.05x?

- Format Compatibility: Does it natively support common video formats (MKV, MP4, AVI, MOV)? This saves you from the headache of converting files.

- Interface Efficiency: Are there easy gesture controls, like long-pressing for 2x speed or swiping to change the volume?

- Hardware Optimization: Does the app use iOS hardware acceleration so that watching 4K or UHD video doesn’t kill your battery?
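To make the “speed range and precision” criterion concrete, here is a minimal sketch of how a player might snap a requested speed to a fixed step inside a supported range. The function name `snap_speed` and the default limits (0.1x to 4.0x in 0.05x steps) are illustrative assumptions based on the figures above, not the behavior of any specific app.

```python
def snap_speed(requested, step=0.05, lowest=0.1, highest=4.0):
    """Clamp a requested speed to [lowest, highest] and snap it to the nearest step.

    All defaults are assumptions taken from the ranges discussed in this article.
    """
    if step <= 0:
        raise ValueError("step must be positive")
    clamped = min(max(requested, lowest), highest)
    # Count whole steps first, then multiply back, to limit floating-point drift.
    steps = round(clamped / step)
    return round(steps * step, 2)


if __name__ == "__main__":
    for value in (1.37, 9.0, 0.01):
        print(value, "->", snap_speed(value))
```

A 0.05x step gives you speeds such as 1.35x or 1.40x, which sit between the usual 1.25x and 1.5x presets.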

The Importance of Precision Playback in 2026

By 2026, being able to control playback precisely has become a must-have for many professionals. Whether you’re a developer checking a screen recording, a student trying to get through a three-hour lecture, or a sports coach analyzing 240fps footage, the ability to scrub through video with millisecond accuracy is a major productivity booster.
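The arithmetic behind these scenarios is simple, and a short sketch helps show what the numbers mean in practice. The helper names below are hypothetical and are only meant to illustrate the calculation.

```python
def watch_time_minutes(duration_minutes, speed):
    """Wall-clock minutes needed to watch a clip at a given playback speed."""
    if speed <= 0:
        raise ValueError("speed must be positive")
    return duration_minutes / speed


def frame_interval_ms(fps):
    """Time between consecutive frames of the source footage, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


if __name__ == "__main__":
    # A three-hour (180-minute) lecture watched at 2x speed.
    print(watch_time_minutes(180, 2.0))      # 90.0
    # Spacing between frames in 240fps footage.
    print(round(frame_interval_ms(240), 2))  # 4.17
```

In other words, a three-hour lecture takes 90 minutes at 2x, and frames in 240fps footage are roughly 4 milliseconds apart, which is why fine-grained scrubbing matters for sports analysis.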

Top Picks: The Best iOS Video Players for Speed Control

These apps are currently among the best options on the App Store for video playback, covering everything from casual watching to detailed frame-level review.

VLC for Mobile: Universal Format King

VLC for Mobile is still the go-to choice if you need to play almost any file type. It’s a free, open-source app that lets you sync files directly from services like Google Drive, Dropbox, and iCloud. As noted by Wondershare UniConverter, VLC is a top recommendation for opening MKV, AVI, and FLV files without needing to convert them first. Its speed slider is very stable, and it keeps the audio pitch natural even when you’re watching at high speeds.

KMPlayer: The UHD and 4K Specialist

If you mainly watch high-definition movies, KMPlayer is a great choice for 4K, UHD, and 3D content. The Softonic Editorial Team points out that KMPlayer’s biggest strength is its support for a massive range of codecs that the standard iOS player just can’t handle. It also includes “KMP Connect,” which lets you stream videos from your PC to your iPhone. It’s well-optimized for the latest iPad Pro and iPhone screens, so 4K video stays sharp even at 1.5x or 2x speed.

MX Player: Mastering Gesture Controls

MX Player is famous for its smooth, gesture-based interface. You can change the playback speed, volume, and brightness just by swiping or tapping the screen. For example, a simple long-press can jump you straight to 2x speed—a feature that PhoneArena mentions was a staple in third-party players long before it showed up in native apps. This makes it a perfect “one-handed” player for people on the go.

Infuse: The Cinephile’s Speed Player

For those who want a premium, “Netflix-style” look for their personal video library, Infuse is the standout. It offers great subtitle support and syncs perfectly with the cloud. Infuse is built for movie lovers who have large collections of MKV and MOV files but still want the flexibility to speed up a slow documentary or slow down a foreign film to catch every word of the dialogue.

According to the App Store listing for Video Player – All in One, which has a 4.6-star rating from over 7,200 users, people today expect “all-in-one” features like Picture-in-Picture (PiP) and Wi-Fi sharing alongside their speed controls.

Gesture Cheat Sheet: Triggering Speed Changes Instantly

Getting things done quickly on iOS usually means using fewer menus. Most top speed players in 2026 use “hidden” gestures to keep the screen uncluttered.

- Long-press for 2X: In apps like Video Player – All in One, holding your finger anywhere on the screen during playback instantly switches to 2x speed—great for skipping through the “fluff” in a video.

- The Slider vs. The Tap: VLC uses a traditional slider for 0.25x to 4.0x speeds. However, newer apps like VideoSpeed offer 0.05x increments, which is perfect if you feel 1.25x is too slow but 1.5x is a bit too fast.

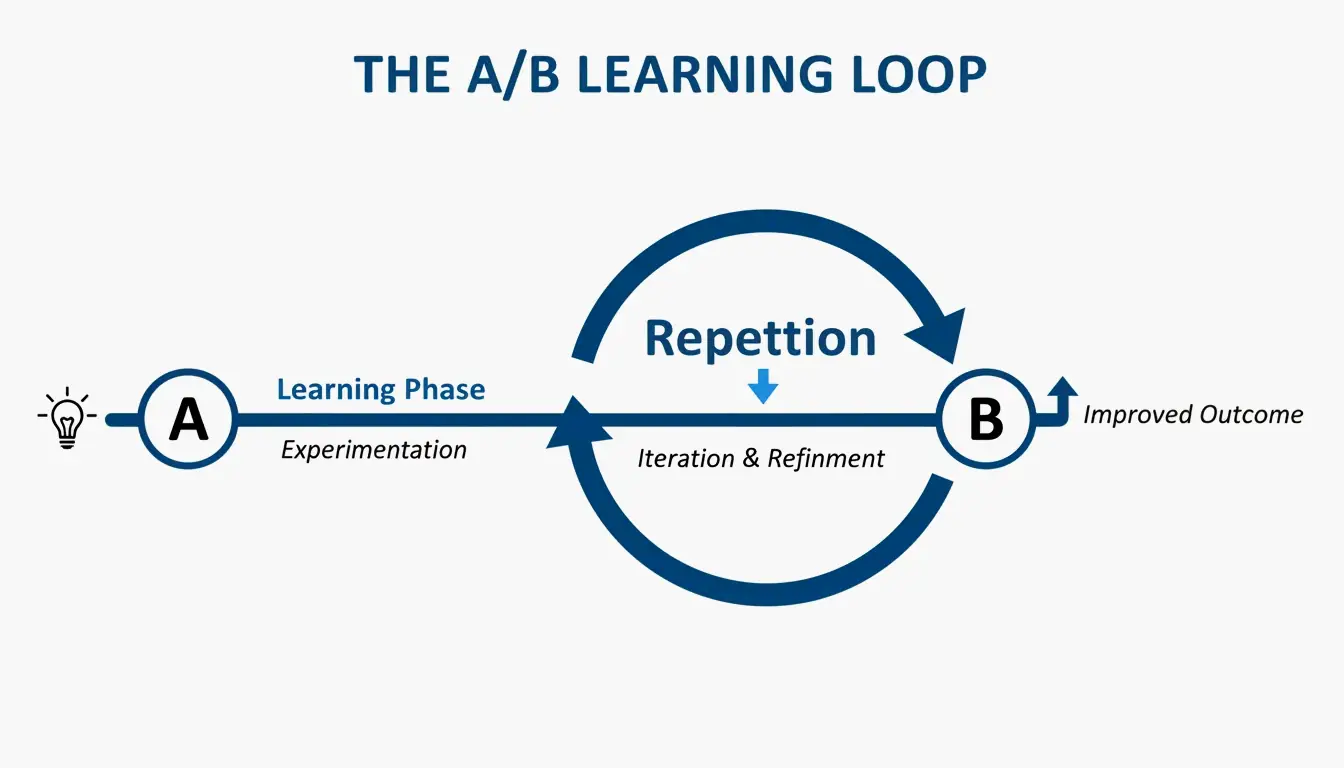

- A/B Loop for Mastery: The A/B Loop is a must for learning. You set a “Point A” and “Point B,” and the player repeats that section. Musicians and dancers often use this to practice a specific move at 0.5x speed before trying it at full speed.
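The A/B loop described above can be sketched in a few lines. This is a simplified model under stated assumptions, not the implementation used by any of the apps mentioned: the function name `next_position` is hypothetical, and positions are plain numbers in seconds rather than real player timestamps.

```python
def next_position(position, elapsed, speed, point_a, point_b):
    """Advance the playhead and wrap back to point A once it reaches point B.

    position, point_a, point_b are in seconds of media time;
    elapsed is wall-clock seconds since the last update.
    """
    if not point_a < point_b:
        raise ValueError("point A must come before point B")
    if speed <= 0:
        raise ValueError("speed must be positive")
    new_position = position + elapsed * speed
    if new_position >= point_b:
        loop_length = point_b - point_a
        # Carry any overshoot past B into the next pass of the loop.
        new_position = point_a + (new_position - point_a) % loop_length
    # If playback started before A, jump to the start of the loop.
    return max(new_position, point_a)


if __name__ == "__main__":
    # Loop between 10s and 12s at 0.5x speed, one wall-clock second later.
    print(next_position(10.0, 1.0, 0.5, 10.0, 12.0))  # 10.5
```

At 0.5x, a two-second section takes four wall-clock seconds per pass, which is the extra practice time the slow-down buys you.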

Specialized Use Cases: Musicians and Productivity Pros

Some apps go beyond just watching video and use speed control to help you learn new skills or process information faster.

Music Speed Changer: Independent Pitch and Tempo

Many basic players shift the pitch when you change the speed, so slowed-down music sounds lower and sped-up music sounds higher. Music Speed Changer fixes this by letting you adjust the tempo and the pitch separately.

- Example: A guitar player can slow a solo down to 0.25x to catch every note while keeping the song in the original key. On the flip side, a singer can change the key of a track without changing the speed at all.
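To see why independent control matters, it helps to look at what happens when audio is simply played faster or slower with no pitch correction. The sketch below uses the standard equal-temperament relationship (12 semitones per octave); the function names are hypothetical, and it does not describe how Music Speed Changer works internally.

```python
import math


def pitch_shift_semitones(speed):
    """Pitch change, in semitones, when audio is sped up or slowed down
    by plain resampling with no pitch correction."""
    if speed <= 0:
        raise ValueError("speed must be positive")
    return 12.0 * math.log2(speed)


def frequency_ratio(semitones):
    """Frequency multiplier for a pitch shift of the given number of semitones."""
    return 2.0 ** (semitones / 12.0)


if __name__ == "__main__":
    # Uncorrected 0.25x playback drops the pitch by two octaves.
    print(pitch_shift_semitones(0.25))  # -24.0
    # Shifting up 12 semitones doubles every frequency.
    print(frequency_ratio(12))          # 2.0
```

Without correction, that 0.25x guitar solo would sound two octaves lower, which is why a tool that holds the key steady is so useful for practice.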

RSVP Technology for Productivity

If you want to get through a transcript or document at high speed, RSVP (Rapid Serial Visual Presentation) technology is a game-changer. Apps like RSVP Reader show words one at a time in the same spot on the screen. This reduces how much your eyes have to move back and forth, with a target reading rate of 500 to 1,000 words per minute (WPM). This is a huge help for reviewing transcripts or scripts while traveling.
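The timing behind RSVP is easy to work out: the reading rate determines how long each word stays on screen. Here is a minimal sketch, assuming a fixed interval per word; the function names are hypothetical and real apps may vary the timing for long words or punctuation.

```python
def word_interval_ms(wpm):
    """Milliseconds each word stays on screen at a given words-per-minute rate."""
    if wpm <= 0:
        raise ValueError("wpm must be positive")
    return 60_000 / wpm


def rsvp_schedule(text, wpm):
    """Return (start_time_ms, word) pairs for showing one word at a time."""
    interval = word_interval_ms(wpm)
    return [(index * interval, word) for index, word in enumerate(text.split())]


if __name__ == "__main__":
    print(word_interval_ms(500))   # 120.0
    print(word_interval_ms(1000))  # 60.0
    for start, word in rsvp_schedule("read this fast", 500):
        print(start, word)
```

At 500 WPM each word is visible for 120 milliseconds, and at 1,000 WPM for only 60 milliseconds, so it is worth starting at the lower end and working up.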

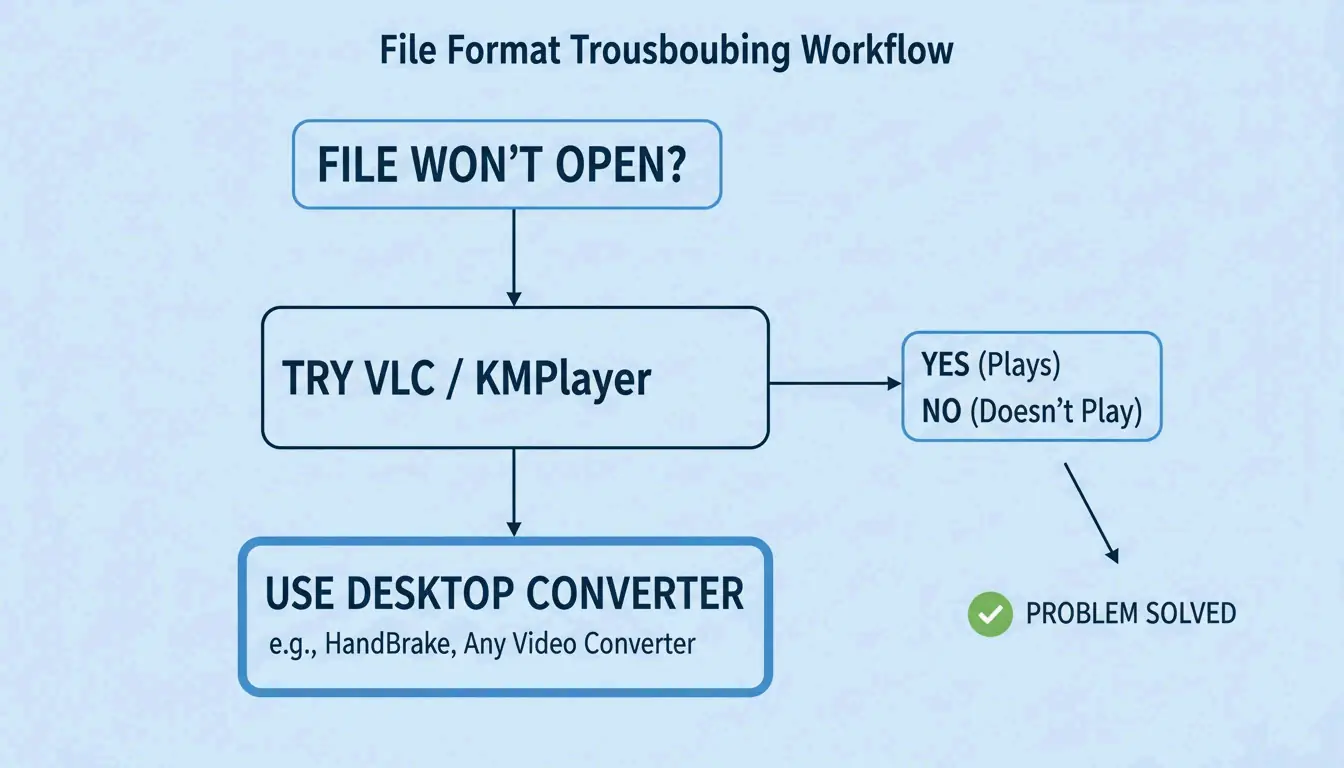

How to Play Incompatible Formats on Your iPhone

Even in 2026, you might find files that the basic iOS Photos app won’t open. You have two main ways to fix this:

- Use a Third-Party App: Download a player like VLC or KMPlayer. These apps have their own built-in “codecs,” so they can play MKV, AVI, and FLV files directly without you having to convert them.

- Convert on Your Computer: If you have a huge library of 4K MKV files, using Wondershare UniConverter on a PC or Mac is often the most reliable way. It can convert over 1,000 different formats into iPhone-ready MP4 files, which iOS can play natively and which also helps save battery life.

Once your files are ready, you can move them over using AirDrop, Google Drive, or a USB-C cable.

Conclusion

The right iOS speed player depends on your specific needs: VLC is great for general format support, KMPlayer excels at high-definition video, and Music Speed Changer is best for precise audio control. For most people, VLC for Mobile is the best free all-around choice. However, if you’re a musician or dancer, Music Speed Changer is worth the download so you can practice without the music sounding distorted.

FAQ

Can I change the playback speed of videos in the native iOS Photos app?

The native iOS Photos app has limited functionality; it only supports speed adjustments for “Slo-mo” videos captured directly on the iPhone. For standard recorded videos or imported files, the app does not provide a speed toggle. You must use an editor like iMovie or a dedicated third-party player like VLC to control playback speed.

Which iPhone video player supports 4K Ultra HD without draining the battery?

KMPlayer is highly optimized for 4K and UHD playback, utilizing hardware acceleration to reduce the load on the CPU. Infuse is another excellent premium option that offers efficient decoding specifically designed to preserve battery life during long-form, high-definition viewing sessions on iPad and iPhone.

Is there an app that changes audio pitch independently of playback speed?

Yes, Music Speed Changer is designed specifically for this purpose. It allows you to shift the pitch by up to ±12 semitones while keeping the playback speed constant. This makes it an ideal tool for musicians who need to practice a song in a different key without it slowing down or speeding up.